Ethics

Law

Responsibility

Projects

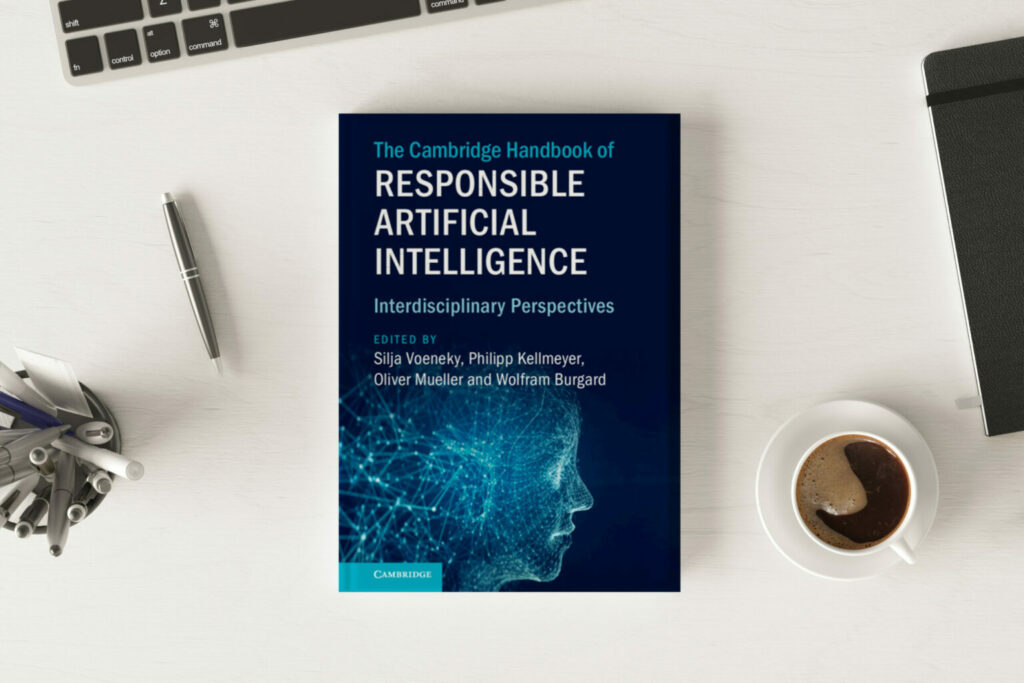

The Cambridge Handbook of Responsible Artificial Intelligence

“The Cambridge Handbook of Responsible Artificial Intelligence – Interdisciplinary Perspectives” will be published in September 2022. It comprises 28 chapters written by participants in our virtual conference “Global Perspectives on Responsible AI”. The book will provide conceptual, technical, ethical, social and legal perspectives on “Responsible AI” and discuss pressing governance challenges for AI and AI systems over the next decade from a global and transdisciplinary perspective.

AI-TRUST

AI-TRUST develops an ‘embedded ethics and law’ approach to investigate the ethical and legal challenges of a deep learning-based assistive system for EEG diagnosis. Throughout the research phase we will jointly develop normative guidance for the development of the system.

RESCALE

RESCALE is a multidisciplinary project on meta-learning for assistive robots, in which we pursue a design-based approach involving mixed-methods qualitative research.

KIDELIR

KIDELIR is a multidisciplinary project on AI-based decision support for predicting delirium in clinical patients.

News

Philipp Kellmeyer in »rbb24 Inforadio«

Prof. Dr. Philipp Kellmeyer from the Responsible AI team was interviewed on the ethics of neurotechnologies for the German radio programme “rbb24 Inforadio”. The segment is titled “Ethik der Neurotechnologien: Gefahren durch die kommerzielle Nutzung?” (in German; in English, “Ethics of neurotechnologies: dangers from commercial use?”). A computer that recognises thoughts – what sounds like science fiction is increasingly becoming reality. Neurotechnologies are also opening up new avenues in…

Interview Study on Mental Privacy, Neurotechnology and Disability

Understanding the Perspectives of People with Disabilities

As part of the PRIVETDIS project, we are conducting a qualitative interview study to explore how people with disabilities perceive mental privacy in the context of neurotechnology. Although neurotechnologies such as brain-computer interfaces and neurostimulation devices are increasingly discussed in ethics and law, the perspectives of potential and…

Silja Vöneky in »Deutschlandfunk«

Prof. Dr. Silja Vöneky from the Responsible AI team was interviewed on the role of artificial intelligence for the German radio programme “Deutschlandfunk Kultur”.

Who we are

Leadership

Research Team

We value networks and exchange between different disciplines and approaches. This is reflected in our team and in our large project group.

We are located at:

We are supported by: